AI-Powered Daily Podcast Pipeline

The Problem

Staying current with fast-moving tech news takes time that most people don't have. Existing podcast and news-digest tools typically rely on cloud services, require manual curation, or produce flat, monotonous audio that is hard to stay engaged with. The goal was to build something that runs automatically every morning, costs nothing to operate, and actually sounds like something worth listening to.

The Solution

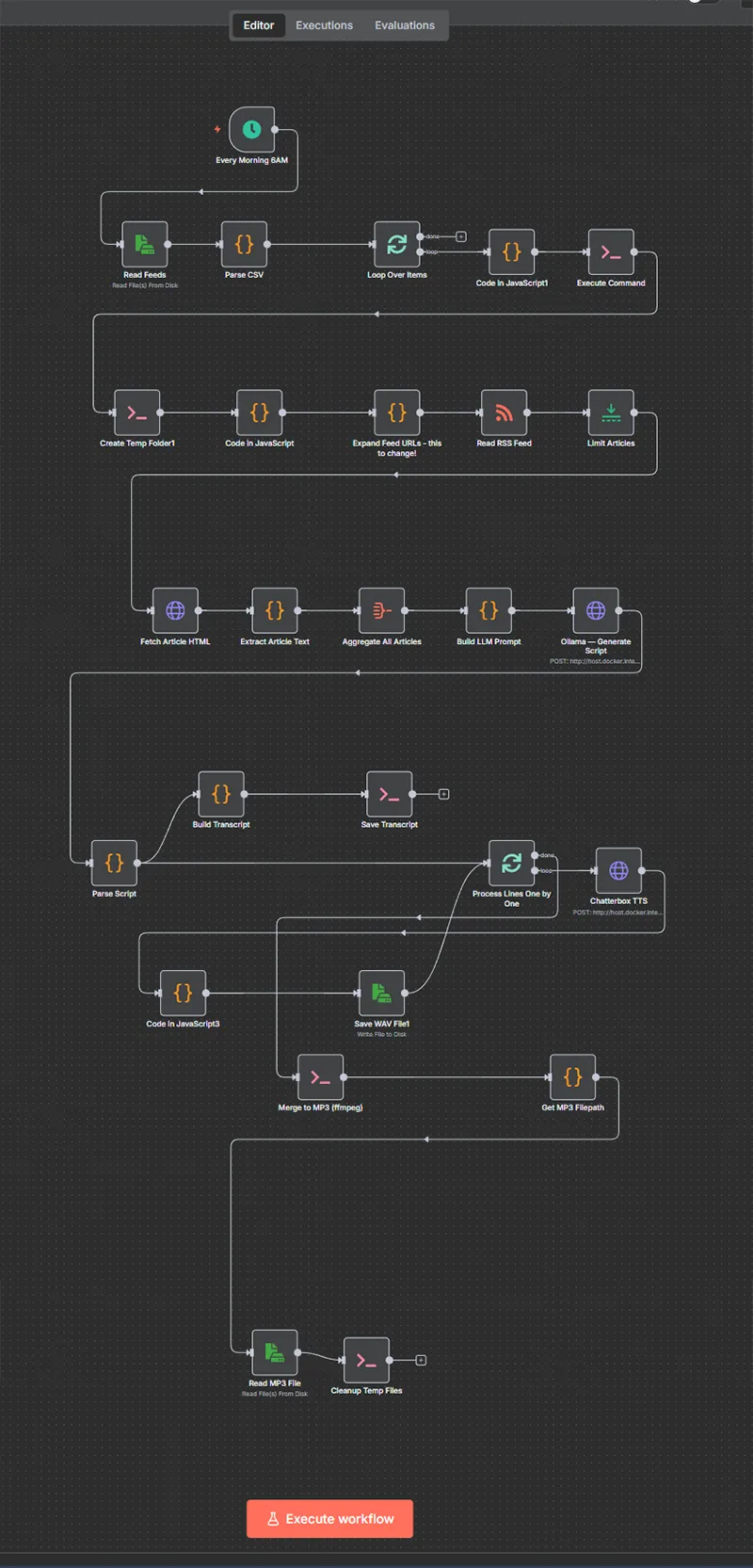

I designed and built an end-to-end automation pipeline in n8n that pulls from configurable RSS feeds, generates a two-host conversation script with a local LLM, converts it to speech using two distinct voices, and delivers the finished episode to a self-hosted podcast library. Every component runs locally: no cloud APIs, no subscription fees, and no data leaving the machine. The system is organised by topic, so each feed category produces its own daily episode.
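The two-voice synthesis step can be sketched in Python as a function that maps each speaker in the generated script to a fixed voice and builds one request body per line. This is a minimal sketch under stated assumptions, not the workflow's actual code: the `HOST_A`/`HOST_B` labels, the `am_adam`/`af_bella` voice IDs, the `"kokoro"` model name, and the script shape (a list of `speaker`/`text` objects) are all hypothetical, and the payload assumes Kokoro FastAPI's OpenAI-style speech endpoint.

```python
from dataclasses import dataclass

# Hypothetical speaker labels mapped to assumed Kokoro voice IDs.
VOICE_MAP = {
    "HOST_A": "am_adam",   # male voice (assumed ID)
    "HOST_B": "af_bella",  # female voice (assumed ID)
}


@dataclass
class TTSJob:
    index: int     # position in the original script, used to keep merge order
    filename: str  # zero-padded clip name so files sort in dialogue order
    payload: dict  # JSON body for an OpenAI-style /v1/audio/speech request


def build_tts_jobs(script: list[dict], voice_map: dict[str, str] = VOICE_MAP) -> list[TTSJob]:
    """Build one TTS request per non-empty script line, voiced by its speaker."""
    jobs = []
    for i, line in enumerate(script):
        text = str(line.get("text") or "").strip()
        if not text:
            continue  # skip empty lines instead of synthesising silence
        speaker = str(line.get("speaker") or "").strip().upper()
        if speaker not in voice_map:
            raise ValueError(f"unknown speaker {speaker!r} on line {i}")
        jobs.append(
            TTSJob(
                index=i,
                filename=f"line_{i:03d}.mp3",
                payload={
                    "model": "kokoro",  # assumed model name
                    "input": text,
                    "voice": voice_map[speaker],
                    "response_format": "mp3",
                },
            )
        )
    return jobs
```

Naming each clip by its position in the script keeps the later ffmpeg merge in dialogue order even when empty lines are skipped.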

The Impact

The result is a fully hands-off morning podcast that arrives in Audiobookshelf each day, ready to listen to during a commute or workout. It replaced a daily habit of manually skimming multiple news sites with something passive and genuinely enjoyable.

The pipeline handles the full production chain: RSS ingestion, article extraction and summarisation, LLM script generation in a dialogue format, per-line text-to-speech synthesis with separate male and female voices, audio merging with proper ID3 metadata, and automatic library delivery. Feed sources and topics are managed through a simple CSV file, making it straightforward to add or remove sources without touching the workflow.
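A feed CSV with one row per source is straightforward to group by topic before the topic loop runs. The sketch below assumes a hypothetical header of `topic,name,url` (the actual column layout isn't described here) and skips rows with a missing topic or URL, as well as repeated topic/URL pairs.

```python
import csv
import io
from collections import defaultdict


def load_feeds(csv_text: str) -> dict[str, list[dict]]:
    """Group feed rows by topic; assumes hypothetical columns topic,name,url."""
    feeds: dict[str, list[dict]] = defaultdict(list)
    seen: set[tuple[str, str]] = set()
    for row in csv.DictReader(io.StringIO(csv_text)):
        topic = (row.get("topic") or "").strip()
        url = (row.get("url") or "").strip()
        # Ignore incomplete rows and exact duplicates within a topic.
        if not topic or not url or (topic, url) in seen:
            continue
        seen.add((topic, url))
        feeds[topic].append({"name": (row.get("name") or "").strip(), "url": url})
    return dict(feeds)
```

Each key of the returned dictionary then corresponds to one pass of the topic loop, so adding a source is just adding a row.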

On the technical side, the stack runs entirely in Docker: n8n for orchestration, Ollama serving a local Mistral model for script generation, Kokoro FastAPI for GPU-accelerated TTS, and ffmpeg for audio processing. Considerable work went into prompt engineering to get the LLM to produce clean, structured JSON consistently, and into building resilient parsing that handles the quirks of locally run models. The n8n workflow loops over topics using a SplitInBatches pattern, processing the feed categories in sequence so that each one produces its own dated episode folder and MP3 file.
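Resilient parsing of local-model output might look something like the following sketch. It assumes, hypothetically, that the model is asked for a JSON array of `speaker`/`text` objects, possibly wrapped in an object under a `script` key. It tolerates a Markdown code fence around the answer, chatter before or after the JSON, and trailing commas, which are common failure modes with smaller local models. The specific quirks the original workflow handles aren't documented, so treat these as illustrative.

```python
import json
import re

# A ```json ... ``` fence that some models wrap around their answer.
_FENCE_RE = re.compile(r"```(?:json)?\s*(.*?)```", re.DOTALL | re.IGNORECASE)
# A comma directly before a closing bracket or brace (invalid in strict JSON).
_TRAILING_COMMA_RE = re.compile(r",\s*([\]}])")


def _decode_from_first_bracket(text: str):
    """Decode the first JSON array/object in text, ignoring anything after it."""
    starts = [i for i in (text.find("["), text.find("{")) if i != -1]
    if not starts:
        raise ValueError("no JSON array or object found")
    value, _end = json.JSONDecoder().raw_decode(text, min(starts))
    return value


def parse_script(raw: str) -> list[dict]:
    """Extract a list of {"speaker", "text"} lines from raw model output."""
    fenced = _FENCE_RE.search(raw)
    text = fenced.group(1) if fenced else raw

    # Try the text as-is first; strip trailing commas only as a fallback,
    # since the regex could also touch commas inside string values.
    for candidate in (text, _TRAILING_COMMA_RE.sub(r"\1", text)):
        try:
            data = _decode_from_first_bracket(candidate)
            break
        except ValueError:  # json.JSONDecodeError is a ValueError subclass
            continue
    else:
        raise ValueError("could not parse JSON from model output")

    # Accept {"script": [...]} as well as a bare array.
    if isinstance(data, dict):
        data = data.get("script")
    if not isinstance(data, list):
        raise ValueError("expected a list of dialogue lines")

    lines = []
    for item in data:
        if not isinstance(item, dict):
            continue
        text_value = str(item.get("text") or "").strip()
        if text_value:
            speaker = str(item.get("speaker") or "").strip()
            lines.append({"speaker": speaker, "text": text_value})
    if not lines:
        raise ValueError("script contained no usable dialogue lines")
    return lines
```

Raising on an empty or unparseable script, rather than returning an empty list, lets the workflow stop or retry generation instead of producing a silent episode.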